Spikey school

Introduction to the hardware system

For an introduction to the Spikey neuromorphic system, its neuron and synapse models, its topology and its configuration space, see section 2 in [Pfeil2013]. Detailed information about the analog implementation of the neuron, STP and STDP models is given in Figure 17 in [Indiveri2011], [Schemmel2007] and [Schemmel2006], respectively. The digital parts of the chip architecture are thoroughly documented in [Gruebl2007Phd]. The following paragraphs briefly summarize the features of the Spikey system.

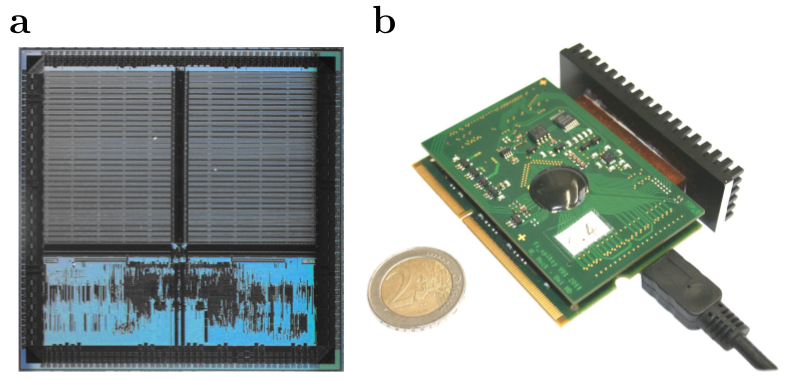

Figure 57: A photograph of the Spikey neuromorphic chip (a) and system (b), respectively. In (b) the Spikey chip is covered by a black seal. Adapted from [Pfeil2013].

The Spikey chip is fabricated in a 180 nm CMOS process with a die size of \(5\,mm \times 5\,mm\). While the communication with the host computer is mostly established by digital circuits, the spiking neural network is mostly implemented with analog circuits. Compared to biological real time, networks on the Spikey chip are accelerated, because time constants on the chip are approximately \(10^4\) times smaller than in biology. Each neuron and synapse has its own physical implementation on the chip. The 384 neurons are split into two blocks of 192 neurons, with 256 synapses per neuron. Each row of synapses in these blocks, i.e., 192 synapses, is driven by a line driver that can be configured to receive input from external spike sources (e.g., generated on the host computer), from on-chip neurons in the same block or from on-chip neurons in the adjacent block.
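As a quick sanity check of the numbers above, the following minimal sketch converts a biological duration into the corresponding wall-clock duration on the chip and counts the synapses. The function and constant names are chosen for illustration only, and the speedup is the approximate factor quoted in the text, not a calibrated value.

```python
# Approximate acceleration factor quoted in the text (not a calibrated value).
SPEEDUP = 1e4

# Topology as described in the text: two blocks, 192 neurons per block,
# 256 line drivers (rows of synapses) per block.
BLOCKS = 2
NEURONS_PER_BLOCK = 192
LINE_DRIVERS_PER_BLOCK = 256


def bio_to_hardware_time(t_bio_s, speedup=SPEEDUP):
    """Return the wall-clock duration (s) on the chip for a biological duration (s)."""
    return t_bio_s / speedup


# Every line driver drives one row with one synapse per neuron of its block.
synapses_total = BLOCKS * NEURONS_PER_BLOCK * LINE_DRIVERS_PER_BLOCK

print(bio_to_hardware_time(1.0))  # one biological second on the chip, in seconds
print(synapses_total)
```

Under this assumption, one second of biological time corresponds to roughly 100 µs of wall-clock time on the chip.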

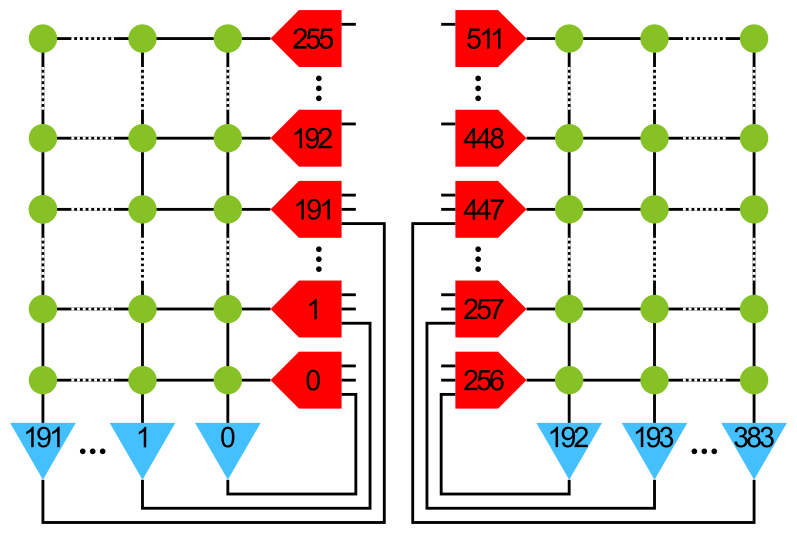

Figure 58: Numbering of neurons (blue) and line drivers (red). Here, only connections within the same block of neurons are shown. For connections between the blocks see the following table. The weight of each synapse (green) can be configured with a 4-bit resolution, i.e., 16 different values.

Neuron assignment to line drivers:

| Line driver ID | Neuron ID left block | Neuron ID right block | Line driver ID | Neuron ID left block | Neuron ID right block |
|---|---|---|---|---|---|
| 0 | 0 | 193 | 256 | 192 | 1 |
| 1 | 1 | 192 | 257 | 193 | 0 |
| 2 | 2 | 195 | 258 | 194 | 3 |
| 3 | 3 | 194 | 259 | 195 | 2 |
| … | … | … | … | … | … |
| 190 | 190 | 383 | 446 | 382 | 191 |
| 191 | 191 | 382 | 447 | 383 | 190 |
| 192 | ext only | ext only | 448 | ext only | ext only |
| … | … | … | … | … | … |
| 255 | ext only | ext only | 511 | ext only | ext only |

The last (upper) 64 line drivers of each block receive external inputs only. Hence, line drivers for external spike sources are allocated from top to bottom.
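The regularity of the table above can be captured in a few lines. The sketch below reproduces the listed rows; it assumes that the rows elided with "…" follow the same pattern as the listed ones (neighbouring neuron IDs swapped pairwise in one of the two columns), which the table suggests but does not state. The function name `table_row` is hypothetical and not part of any Spikey software.

```python
NEURONS_PER_BLOCK = 192
DRIVERS_PER_BLOCK = 256


def table_row(line_driver):
    """Return the two neuron-ID columns of the table for a line driver ID.

    Returns None for line drivers that receive external input only.
    Assumption: rows not listed explicitly follow the pattern of the listed rows.
    """
    if not 0 <= line_driver < 2 * DRIVERS_PER_BLOCK:
        raise ValueError("line driver ID must be in [0, 511]")
    block, local = divmod(line_driver, DRIVERS_PER_BLOCK)
    if local >= NEURONS_PER_BLOCK:
        return None  # upper 64 line drivers of each block: external input only
    partner = local ^ 1  # swap even and odd neighbours: 0<->1, 2<->3, ...
    if block == 0:
        return (local, NEURONS_PER_BLOCK + partner)
    return (NEURONS_PER_BLOCK + local, partner)


for driver in (0, 1, 191, 192, 256, 447, 448):
    print(driver, table_row(driver))
```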

The hardware implementations of neurons and synapses are inspired by the leaky integrate-and-fire neuron model using synapses with exponentially decaying or alpha-shaped conductances (PyNN neuron model IF_facets_hardware1).

While the leak conductance (PyNN neuron model parameter g_leak) and the (absolute) refractory period (tau_refrac) are individually configurable for each neuron, the resting (v_rest), reset (v_reset), threshold (v_thresh), excitatory reversal (clamped to ground) and inhibitory reversal potentials (e_rev_I) are shared among neurons (see [Pfeil2013] for details).

Line drivers generate the time course of postsynaptic conductances (PSCs) for a single row of synapses.

Among other parameters, the rise time, fall time and amplitude of PSCs can be modulated for each line driver (for details see Lesson 1: Exploring the parameter space and Figures 4.8 and 4.9 in [Petkov2012]).

Each synapse stores a configurable 4-bit weight.

A synapse can be turned off by setting its weight to zero.

During network emulations, spike times can be recorded from all neurons in parallel. In contrast, membrane potential recordings are limited to a single, but arbitrary, neuron.

Network models for the Spikey hardware are described and controlled with PyNN (version 0.6; for an introduction to PyNN see Building models). Because PyNN is a Python package, we recommend working through a Python tutorial first. For efficient data analysis and visualization with Python, see the tutorials for NumPy, Matplotlib and SciPy.

Short-term plasticity (STP)

Synaptic efficacy has been shown to change with presynaptic activity on the time scale of hundreds of milliseconds [ScholarpediaShortTermPlasticity]. The hardware implementation of such short-term plasticity is close to the model introduced by [Tsodyks1997]. However, on hardware, STP can be either depressing or facilitating, but not a mixture of both as allowed by the original model. For details about the hardware implementation and emulation results, see [Schemmel2007] and Lesson 5: Short-term plasticity, respectively.

Spike-timing dependent plasticity (STDP)

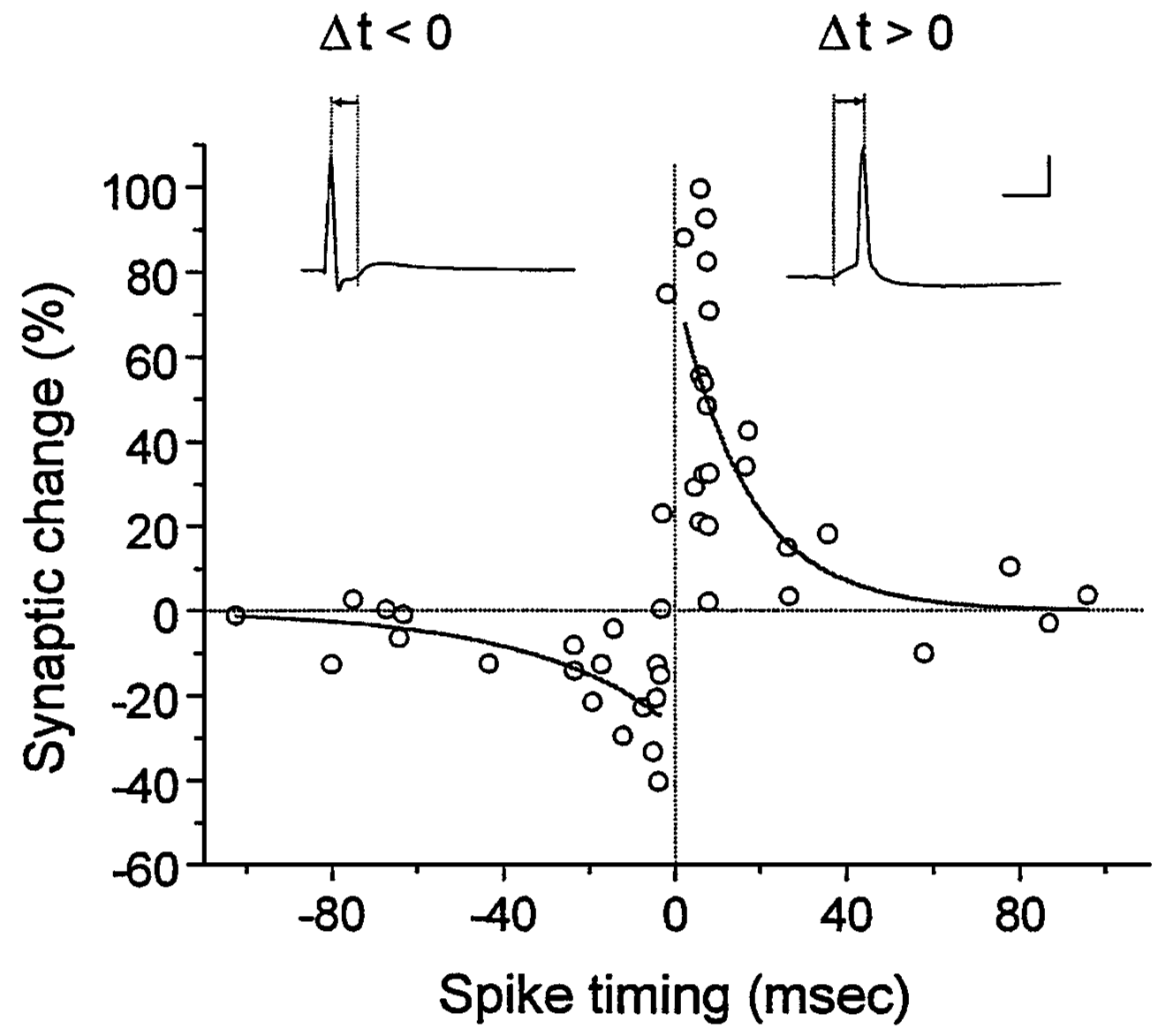

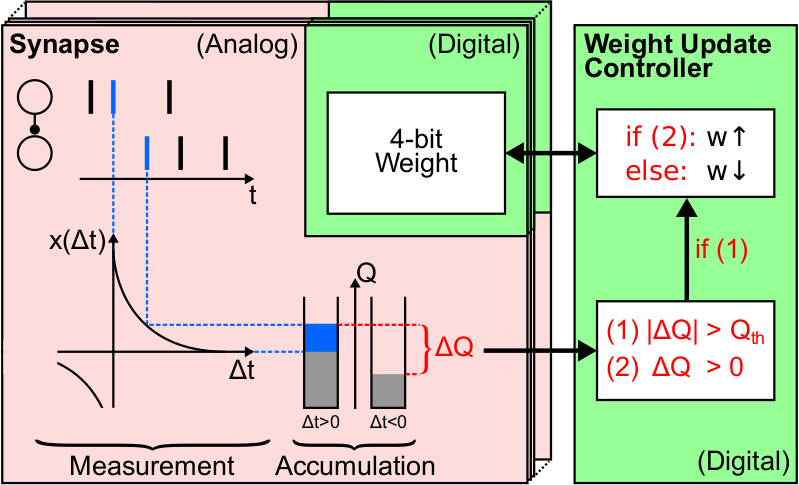

Long-term (seconds to years) modification of synaptic weights has been shown to depend on the precise timing of spikes [ScholarpediaSTDP]. Weights are usually increased if the postsynaptic neuron fires after the presynaptic one, and decreased in the opposite case. Typically, the smaller the time difference between the pre- and postsynaptic spike, the larger the weight change. On hardware, temporal correlations between pre- and postsynaptic neurons are measured and stored locally in each synapse. A global mechanism then sequentially evaluates these measurements and updates the synaptic weights according to a programmable look-up table.

Figure 59: Spike-timing dependent plasticity measured in biological tissue (rat hippocampal neurons; adapted from [Bi2001]).

Figure 60: Hardware implementation of STDP (adapted from [Pfeil2015Phd]).

For a detailed description of the hardware implementation, measurements of single synapses and functional networks, see [Schemmel2006], [Pfeil2012STDP] and [Pfeil2013STDP], respectively. Note that on hardware the reduced symmetric nearest neighbor spike pairing scheme is used (see Figure 7C in [Morrison2008]).

Introduction to the lessons

Note that all emulation results shown in the following lessons were recorded from the Spikey chip 666 and may be different for other chips.

In particular, network, neuron and synapse parameters may have to be adjusted for proper network activity.

In the following, we use pynn as an alias for pyNN.hardware.stage1.

Lesson 1: Exploring the parameter space

In this lesson, we explore the parameter space of neurons and synapses on the Spikey chip.

First, the parameters of neurons are investigated. As an example, we measure the firing rate of a neuron as a function of its leak conductance. The neuron is stimulated by spikes from a Poisson process.

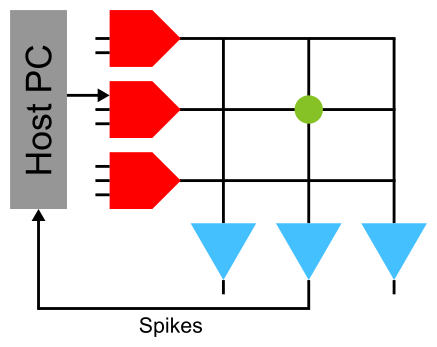

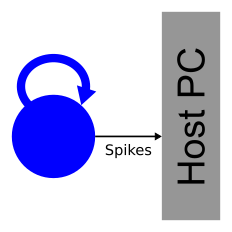

Figure 61: A neuron is stimulated using an external spike source and the spike times of the neuron are recorded. Synapses with weight zero are not drawn.

To average out fixed-pattern noise (see Lesson 2: Fixed-pattern and temporal noise) in both the synapse and neuron circuits, a population of neurons is stimulated by a population of spike sources.

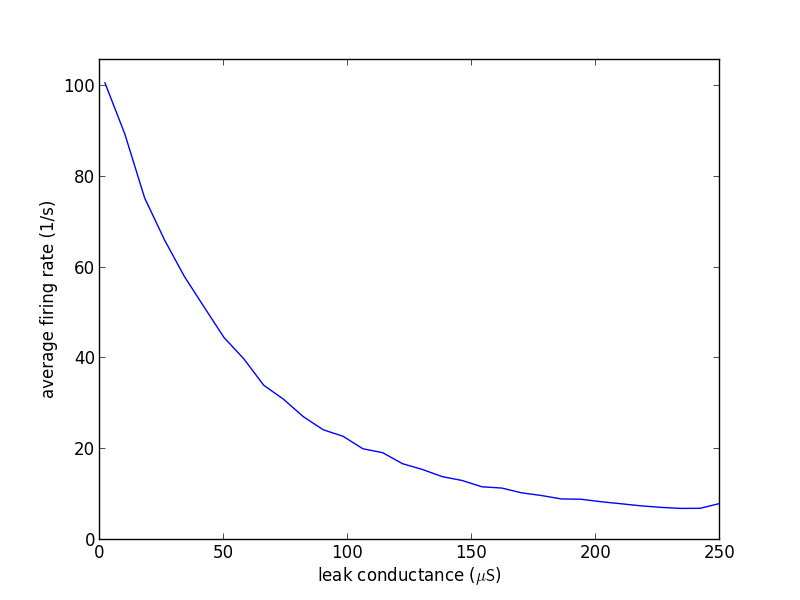

Figure 62: The firing rate of the neuron as a function of its leak conductance \(g_{leak}\) (source code lesson 1-1).

Increasing the leak conductance of the neuron pulls its membrane potential more strongly towards the resting potential, and hence the firing rate of the neuron decreases.
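The qualitative effect can be reproduced with a small software model. The sketch below is not the hardware neuron and does not use the PyNN hardware backend: it is a plain leaky integrate-and-fire neuron with a constant excitatory conductance, integrated with the Euler method. All parameter values and names are illustrative assumptions; only the excitatory reversal potential of 0 mV follows the text (clamped to ground).

```python
def lif_firing_rate(g_leak_uS, g_exc_uS=0.02, duration_ms=1000.0, dt_ms=0.05):
    """Firing rate (Hz) of a conductance-based LIF neuron with constant drive.

    Units: conductances in uS, capacitance in nF, potentials in mV, time in ms.
    All parameter values are illustrative, not calibrated hardware values.
    """
    c_m_nF = 0.2
    v_rest, v_reset, v_thresh = -75.0, -80.0, -55.0
    e_rev_exc = 0.0  # excitatory reversal potential, clamped to ground
    refrac_steps = int(round(1.0 / dt_ms))  # 1 ms absolute refractory period

    v = v_rest
    refrac_left = 0
    n_spikes = 0
    for _ in range(int(round(duration_ms / dt_ms))):
        if refrac_left > 0:
            refrac_left -= 1  # membrane is held at reset while refractory
            continue
        # Leak pulls towards v_rest, excitation pulls towards e_rev_exc (nA).
        current_nA = g_leak_uS * (v_rest - v) + g_exc_uS * (e_rev_exc - v)
        v += current_nA / c_m_nF * dt_ms  # Euler step; nA / nF = mV / ms
        if v >= v_thresh:
            n_spikes += 1
            v = v_reset
            refrac_left = refrac_steps
    return n_spikes / (duration_ms / 1000.0)


for g_leak in (0.02, 0.04, 0.06):
    print(g_leak, lif_firing_rate(g_leak))
```

With these values the steady-state potential drops below threshold for the largest leak conductance, so the model neuron stops firing altogether.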

Tasks:

Measure and plot the dependency of the firing rate on other neuron parameters (for parameters, see Introduction to the hardware system). Interpret these dependencies qualitatively.

Calibrate the firing rate of the neuron to a reasonable target rate by adjusting its leak conductance.

Replace the input from a Poisson process (pynn.SpikeSourcePoisson) by a regular input with the same rate (tip: use pynn.SpikeSourceArray). What do you observe?
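For the last task, spike times for a regular input have to be generated on the host. The following sketch builds a regular spike train and, for comparison, a Poisson spike train with the same mean rate, using only the Python standard library. The helper names are hypothetical; the resulting list of times (in milliseconds) is the kind of data one would hand to a spike source array.

```python
import random


def regular_spike_times(rate_hz, duration_ms):
    """Equidistant spike times (ms) with the given rate, starting at t = 0."""
    interval_ms = 1000.0 / rate_hz
    n_spikes = int(duration_ms / interval_ms)
    return [i * interval_ms for i in range(n_spikes)]


def poisson_spike_times(rate_hz, duration_ms, rng):
    """Spike times (ms) of a homogeneous Poisson process with the given rate."""
    rate_per_ms = rate_hz / 1000.0
    times = []
    t = rng.expovariate(rate_per_ms)  # exponentially distributed intervals
    while t < duration_ms:
        times.append(t)
        t += rng.expovariate(rate_per_ms)
    return times


rng = random.Random(1234)  # fixed seed for reproducible stimuli
regular = regular_spike_times(rate_hz=20.0, duration_ms=1000.0)
poisson = poisson_spike_times(rate_hz=20.0, duration_ms=1000.0, rng=rng)
print(len(regular), len(poisson))
```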

Second, synaptic parameters are investigated. A neuron is stimulated with a single spike and its membrane potential is recorded. To average out noise on the membrane potential (mostly caused by the readout process) we stimulate the neuron with a regular spike train and calculate the spike-triggered average of these so-called excitatory postsynaptic potentials (EPSPs).

Figure 63: Postsynaptic potentials measured in biological tissue (from motoneurons; adapted from [Coombs1955]).

Figure 64: A neuron is stimulated using a single synapse and its membrane potential is recorded. The parameters of synapses are adjusted row-wise in the line drivers (red).

Figure 65: Single and averaged excitatory postsynaptic potentials (source code lesson 1-2).
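The spike-triggered average described above can be sketched as follows. This is not the code of lesson 1-2 but a minimal stand-alone illustration: the membrane trace is assumed to be a list of equidistant samples, and the stimulus times are assumed to be already converted to sample indices. The `skip` parameter, a name chosen here, discards the first few EPSPs.

```python
def spike_triggered_average(trace, trigger_indices, window, skip=0):
    """Average windows of `trace` that start at the given sample indices.

    trace:           membrane potential samples (equidistant in time)
    trigger_indices: sample index of each stimulus spike
    window:          number of samples per EPSP window
    skip:            number of initial EPSPs to discard
    """
    segments = [
        trace[i:i + window]
        for i in trigger_indices[skip:]
        if i + window <= len(trace)  # drop windows that run past the recording
    ]
    if not segments:
        raise ValueError("no complete EPSP window available")
    # zip(*segments) groups the k-th sample of every window together.
    return [sum(samples) / len(segments) for samples in zip(*segments)]


# Synthetic example: the same EPSP shape with an alternating readout offset.
epsp = [0.0, 1.0, 2.0, 1.5, 1.0, 0.5]
period = 10
trace = []
for repetition in range(6):
    offset = 0.1 if repetition % 2 == 0 else -0.1
    for i in range(period):
        trace.append((epsp[i] if i < len(epsp) else 0.0) + offset)
triggers = [repetition * period for repetition in range(6)]

print(spike_triggered_average(trace, triggers, window=len(epsp), skip=2))
```

In this synthetic trace the offsets cancel in the average, so the original EPSP shape is recovered up to rounding.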

Tasks:

Vary the parameters drvifall and drviout of the synapse line drivers and investigate their effect on the shape of EPSPs (tip: use pynn.Projection.setDrvifallFactors and pynn.Projection.setDrvioutFactors to scale these parameters, respectively).

Compare the postsynaptic potentials evoked by excitatory and inhibitory synapses.

Compare the shape of the first EPSPs. They may differ due to the initial charging of capacitances (e.g. wires). Discard an appropriate number of EPSPs at the beginning of the emulation to avoid these distortions.

Lesson 2: Fixed-pattern and temporal noise

In this lesson, we investigate fixed-pattern and temporal noise in the analog neuron and synapse circuits of the Spikey hardware system.

In contrast to simulations with software, emulations on analog neuromorphic hardware are subject to noise. We distinguish between fixed-pattern and temporal noise. Fixed-pattern noise consists of variations of neuron and synapse parameters across the chip due to imperfections in the production process. Calibration can reduce this noise, because it is approximately constant over time. In contrast, temporal noise, including electronic noise and temperature fluctuations, causes different results in consecutive emulations of identical networks.

Tasks:

Investigate the fixed-pattern noise across neurons: Record the firing rates of several neurons for the default value of the leak conductance (see Figures 61 and 62; tip: record all neurons at once). Interpret the distribution of these firing rates by plotting a histogram and calculating the variance.

Investigate the fixed-pattern noise across synapses: For a single neuron, vary the row of the stimulating synapse and calculate the variance of the area under the EPSPs across synapses (see Figures 64 and 65).

Estimate the ratio between fixed-pattern and temporal noise: Measure the reproducibility of emulations, i.e., the error of firing rates across identical consecutive trials. Use the network and parameters from the first task and measure this error for each neuron. Compare the variance of the firing rates across trials (averaged across neurons) to the variance across neurons in a single trial. Extra: How does the reproducibility depend on the duration of emulations and the number of consecutive trials?
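The comparison asked for in the last task can be organized as in the following sketch. It assumes that the measured firing rates are available as a nested list `rates[trial][neuron]`; this data layout, the function name and the numbers are made up for illustration and are not measurements from the chip.

```python
import statistics


def noise_summary(rates):
    """Compare rate variability across neurons and across trials.

    rates[trial][neuron] holds the firing rate (Hz) of each neuron per trial.
    Returns (variance across neurons in the first trial,
             variance across trials averaged over neurons).
    """
    across_neurons = statistics.variance(rates[0])
    # zip(*rates) regroups the data per neuron: one tuple of trials each.
    per_neuron = [statistics.variance(trials) for trials in zip(*rates)]
    across_trials = statistics.mean(per_neuron)
    return across_neurons, across_trials


# Made-up rates of three neurons in three identical trials.
example_rates = [
    [10.0, 20.0, 30.0],
    [12.0, 18.0, 30.0],
    [11.0, 22.0, 30.0],
]
fixed_pattern, temporal = noise_summary(example_rates)
print(fixed_pattern, temporal, fixed_pattern / temporal)
```

A large ratio of the first to the second value indicates that fixed-pattern noise dominates over temporal noise for the given network and emulation duration.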

Lesson 3: Feedforward networks

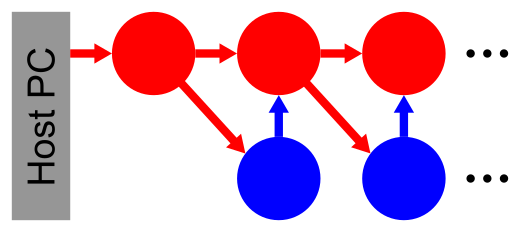

In this lesson, we learn how to set up networks on the Spikey system. In the previous lessons, neurons received their input exclusively from external spike sources. Now, we introduce connections between hardware neurons. As an example, a synfire chain with feedforward inhibition is implemented (for details, see [Pfeil2013]). Populations of neurons represent the links in this chain and are unidirectionally interconnected. After stimulating the first neuron population, network activity propagates along the chain, whereby neurons of the same population fire synchronously.

Figure 66: Schematic of a synfire chain with feedforward inhibition. Excitatory and inhibitory neurons are coloured red and blue, respectively.

In PyNN, connections between hardware neurons can be treated like connections from external spike sources to hardware neurons.

Note that synaptic weights on hardware can be configured with integer values in the range [0..15].

To stay within the range of synaptic weights supported by the hardware, it is useful to specify weights in the domain of these integer values and translate them into the biological parameter domain by multiplying them with pynn.minExcWeight() or pynn.minInhWeight() for excitatory and inhibitory connections, respectively.

Synaptic weights that are not multiples of pynn.minExcWeight() and pynn.minInhWeight() for excitatory and inhibitory synapses, respectively, are stochastically rounded to integer values.
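To illustrate what stochastic rounding means here, the sketch below maps a weight in the biological domain to a 4-bit integer. It is an assumption-laden illustration, not the backend's actual implementation: the exact rounding scheme used by the software is not described in this text, and `min_weight` is a placeholder for the value returned by pynn.minExcWeight() or pynn.minInhWeight().

```python
import math
import random

W_MAX = 15  # largest 4-bit weight


def to_hardware_weight(weight, min_weight, rng):
    """Map a biological weight to an integer in [0, 15] by stochastic rounding.

    The weight is rounded up with a probability equal to its fractional part
    (in units of min_weight), so the expected value equals the requested
    weight as long as it lies within the supported range.
    """
    x = weight / min_weight
    nearest = round(x)
    if abs(x - nearest) < 1e-9:  # exact multiple: no randomness needed
        digital = nearest
    else:
        lower = math.floor(x)
        digital = lower + (1 if rng.random() < x - lower else 0)
    return max(0, min(W_MAX, digital))  # clip to the supported range


rng = random.Random(42)
min_weight = 0.002  # placeholder value, not taken from the hardware
samples = [to_hardware_weight(2.3 * min_weight, min_weight, rng) for _ in range(10)]
print(samples)  # a mixture of 2 and 3
```

Specifying weights as integer multiples of the minimal weight avoids the random component altogether.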

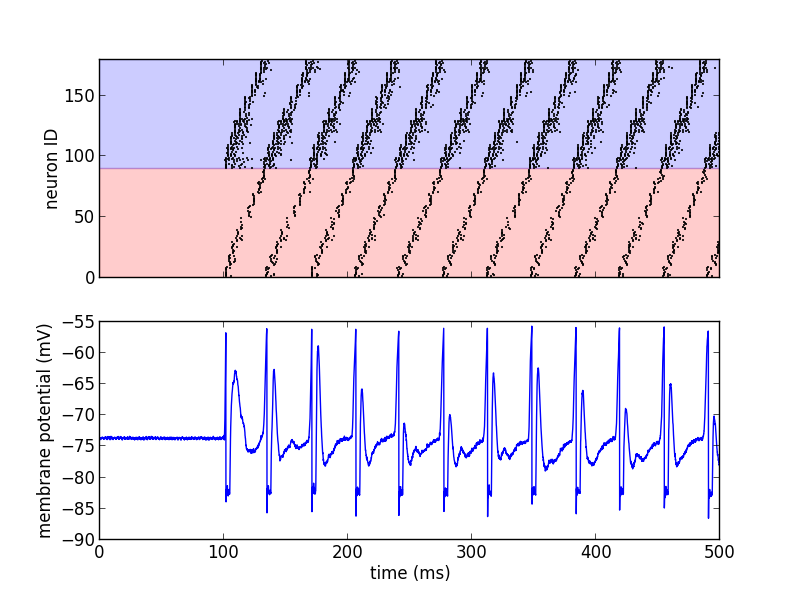

Figure 67: Emulated network activity of the synfire chain including the membrane potential of the neuron with ID=0 (source code lesson 3). The same color code as in the schematic is used.

Tasks:

Adjust the synaptic weights to obtain a loop of network activity that lasts for at least 1000 seconds.

Reduce the number of neurons in each population and maximize the period of network activity. Which hardware feature limits the minimal number of neurons in each population?

Open the loop and increase the number of neurons in each population to obtain a stable propagation of network activity. Systematically vary the initial stimulus (number of spikes and standard deviation of their timing) to investigate the filter properties of this network (for orientation, see [Kremkow2010] and [Pfeil2013]).
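For the last task, the initial stimulus has to be varied in the number of spikes and in the spread of their timing. One possible way to generate such a stimulus on the host is sketched below, assuming Gaussian-distributed spike times; the function name and the parameter values are hypothetical.

```python
import random


def pulse_packet(n_spikes, sigma_ms, t_center_ms, rng):
    """Sorted spike times (ms) drawn from a Gaussian around t_center_ms.

    Times are clipped at zero, because spikes cannot be sent before the
    start of the emulation.
    """
    times = [rng.gauss(t_center_ms, sigma_ms) for _ in range(n_spikes)]
    return sorted(max(t, 0.0) for t in times)


rng = random.Random(7)
for sigma in (0.0, 2.0, 10.0):
    packet = pulse_packet(n_spikes=5, sigma_ms=sigma, t_center_ms=100.0, rng=rng)
    print(sigma, [round(t, 1) for t in packet])
```

Sweeping `n_spikes` and `sigma_ms` over a grid and recording whether activity reaches the end of the chain gives the filter characteristic of the network.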

Lesson 4: Recurrent networks

In this lesson, a recurrent network of neurons with sparse and random connections is investigated. To avoid self-reinforcing network activity that may arise from excitatory connections, we choose connections between neurons to be inhibitory with weight \(w\). Each neuron is configured to have a fixed number \(K\) of presynaptic partners that are randomly drawn from all hardware neurons (for details, see [Pfeil2015]). Neurons are driven by a constant current that pushes them above threshold even in the absence of external input. Technically, this current is implemented by setting the resting potential above the firing threshold of the neuron. Because no external stimulation is needed, no spikes have to be transferred to the system, which accelerates the execution of experiments. In addition, once configured, this recurrent network could in principle run indefinitely.

Figure 68: Schematic of the recurrent network. Neurons within a population of inhibitory neurons are randomly and sparsely connected to each other.

Figure 69: Network activity of a recurrent network with \(K=15\) (source code lesson 4).
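The connectivity rule of this network, a fixed number \(K\) of randomly drawn presynaptic partners per neuron, can be sketched in plain Python as follows. This is an illustration of the rule only and not the code of lesson 4; whether a neuron may connect to itself is not stated in the text, so it is exposed as a parameter here.

```python
import random


def fixed_indegree_connections(n_neurons, k, rng, allow_self=False):
    """Draw K distinct presynaptic partners for every neuron.

    Returns a dict mapping each postsynaptic neuron index to the list of its
    presynaptic neuron indices.
    """
    connections = {}
    for post in range(n_neurons):
        candidates = [pre for pre in range(n_neurons) if allow_self or pre != post]
        connections[post] = rng.sample(candidates, k)  # sampling without replacement
    return connections


rng = random.Random(2015)
connections = fixed_indegree_connections(n_neurons=192, k=15, rng=rng)
print(connections[0])
```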

Tasks:

For each neuron, measure the firing rate and plot it against the coefficient of variation (CV) of its inter-spike intervals. Interpret the correlation between firing rates and CVs.

Measure the dependence of the firing rates and CVs on \(w\) and \(K\). Calibrate the network towards a firing rate of approximately \(25 \frac{1}{s}\). Extra: Additionally, maximize the average CV.

Extra: Calculate the pair-wise correlation between randomly drawn spike trains of different neurons in the network (consider using http://neuralensemble.org/elephant/ to calculate the correlation). Investigate the dependence of the average correlation on \(w\) and \(K\) (tip: use 100 pairs of neurons to calculate the average). Use these results to minimize correlations in the activity of the network.
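The firing rate and the CV of inter-spike intervals used in these tasks can be computed from recorded spike times as in the following minimal sketch. It uses only the Python standard library and the population standard deviation; the function name and the example spike times are illustrative.

```python
import statistics


def rate_and_cv(spike_times_ms, duration_ms):
    """Return (firing rate in Hz, CV of inter-spike intervals) of one neuron.

    The CV is the standard deviation of the inter-spike intervals divided by
    their mean. It is undefined (NaN) for fewer than two intervals.
    """
    rate_hz = len(spike_times_ms) / (duration_ms / 1000.0)
    isis = [later - earlier
            for earlier, later in zip(spike_times_ms, spike_times_ms[1:])]
    if len(isis) < 2:
        return rate_hz, float("nan")
    return rate_hz, statistics.pstdev(isis) / statistics.mean(isis)


print(rate_and_cv([0, 10, 20, 30, 40], duration_ms=100))  # perfectly regular train
print(rate_and_cv([0, 10, 30, 40, 60], duration_ms=100))  # alternating intervals
```

A perfectly regular spike train has a CV of zero, while a Poisson process has a CV close to one.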

Lesson 5: Short-term plasticity

In this lesson, the hardware implementation of short-term plasticity (STP) is investigated. The network description is similar to that shown in Figure 64, but with STP enabled in the synapse line driver.

Figure 70: Depressing STP measured in biological tissue (adapted from [Tsodyks1997]).

Figure 71: Depressing STP on the Spikey neuromorphic system (source code lesson 5).

The weight of the synapse decreases with each presynaptic spike and recovers in the absence of presynaptic input.
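This behaviour can be illustrated with a simplified, purely depressing resource model in the spirit of [Tsodyks1997]. The sketch below is a software illustration, not the hardware circuit: each spike consumes a fraction `u` of the available resources, which recover exponentially with time constant `tau_rec_ms`. Both parameter names and their values are assumptions made for this example.

```python
import math


def depressing_amplitudes(spike_times_ms, u=0.5, tau_rec_ms=100.0):
    """Relative synaptic efficacy at each presynaptic spike (depression only)."""
    resources = 1.0  # fraction of available synaptic resources
    last_t = None
    amplitudes = []
    for t in spike_times_ms:
        if last_t is not None:
            # Exponential recovery towards 1 since the previous spike.
            resources = 1.0 - (1.0 - resources) * math.exp(-(t - last_t) / tau_rec_ms)
        amplitude = u * resources  # efficacy of this spike
        amplitudes.append(amplitude)
        resources -= amplitude  # resources used up by this spike
        last_t = t
    return amplitudes


# Five spikes at 100 Hz followed by a test spike after one second of silence.
spikes = [0.0, 10.0, 20.0, 30.0, 40.0, 1040.0]
print([round(a, 3) for a in depressing_amplitudes(spikes)])
```

The efficacy drops during the burst and is almost fully restored for the late test spike, as in the depressing case shown in the figures.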

Tasks:

Compare the membrane potential to a network with STP disabled.

Configure STP to be facilitating.

Lesson 6: Long-term plasticity

In this lesson, we investigate spike-timing dependent plasticity (STDP) on hardware. An external input is connected to the postsynaptic neuron and STDP is enabled for this plastic synapse (P). To adjust the timing between pre- and postsynaptic spikes, several external inputs with static synaptic weights (S) are used to elicit a spike in the postsynaptic neuron. By measuring \(d\), the timing \(\Delta t\) between the pre- and postsynaptic spike can be adjusted on the host computer (see spike timing in Figure 72 B).

Figure 72: Network configuration (A) and spike timing (B) to measure STDP on the Spikey chip (source code lesson 6).

This network can be used to measure the dependency of synaptic changes \(\Delta w\) on the timing \(\Delta t\) (cf. Figure 59). The inverse of the number \(N\) of spike pairs that are required to elicit a weight update represents the change of the synaptic weight.
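Why \(\frac{1}{N}\) can serve as a measure of the weight change is illustrated by the following toy model. It is a strong simplification and an assumption of this example, not a description of the chip: each spike pair adds an exponentially weighted contribution to a local accumulator, and once a threshold is crossed the 4-bit weight is updated through a look-up table. The time constant, the threshold and the tables are made-up values.

```python
import math

TAU_MS = 20.0    # hypothetical decay constant of the correlation measurement
THRESHOLD = 3.0  # hypothetical accumulator threshold for a weight update
W_MAX = 15       # largest 4-bit weight

# Hypothetical look-up tables, indexed by the current weight.
LUT_CAUSAL = [min(w + 1, W_MAX) for w in range(W_MAX + 1)]
LUT_ACAUSAL = [max(w - 1, 0) for w in range(W_MAX + 1)]


def pairs_until_update(delta_t_ms):
    """Number N of spike pairs with fixed timing needed to cross the threshold."""
    contribution = math.exp(-abs(delta_t_ms) / TAU_MS)
    accumulated = 0.0
    n_pairs = 0
    while accumulated < THRESHOLD:
        accumulated += contribution
        n_pairs += 1
    return n_pairs


def apply_update(weight, delta_t_ms):
    """New weight after one update; delta_t_ms > 0 means post fires after pre."""
    table = LUT_CAUSAL if delta_t_ms > 0 else LUT_ACAUSAL
    return table[weight]


for delta_t in (-40.0, -20.0, -1.0, 1.0, 20.0, 40.0):
    n = pairs_until_update(delta_t)
    sign = 1 if delta_t > 0 else -1
    print(delta_t, n, sign / n)
```

In this toy model, fewer spike pairs are needed for small \(|\Delta t|\), so \(\frac{1}{N}\) is largest close to \(\Delta t = 0\), qualitatively resembling the shape of a biological STDP curve.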

Tasks:

Configure the hardware neurons and synapses such that each volley of presynaptic spikes evokes exactly one postsynaptic spike. Due to the intrinsic adaptation of hardware neurons, consider discarding the first few spike pairs for the plastic synapse.

Plot \(\frac{1}{N}\) over \(\Delta t\) and compare your results to Figure 59.

Extra: Investigate the results of the last task for varying rows and columns of synapses.

Lesson 7: Functional networks

Other network examples

References

- Bi2001

Bi et al. (2001). Synaptic modification by correlated activity: Hebb’s postulate revisited. Annu. Rev. Neurosci. 24, 139–66.

- Coombs1955

Coombs et al. (1955). Excitatory synaptic action in motoneurones. The Journal of Physiology 130 (2), 374–395.

- Gruebl2007Phd

Grübl, A. (2007). VLSI Implementation of a Spiking Neural Network. PhD thesis, Heidelberg University. HD-KIP 07-10.

- Indiveri2011

Indiveri et al. (2011). Neuromorphic silicon neuron circuits. Front. Neurosci. 5 (73).

- Kremkow2010

Kremkow et al. (2010). Gating of signal propagation in spiking neural networks by balanced and correlated excitation and inhibition. J. Neurosci. 30 (47), 15760–15768.

- Morrison2008

Morrison et al. (2008). Phenomenological models of synaptic plasticity based on spike-timing. Biol. Cybern. 98, 459–478.

- Petkov2012

Petkov, V. (2012). Toward Belief Propagation on Neuromorphic Hardware. Diploma thesis, Heidelberg University. HD-KIP 12-23.

- Pfeil2012STDP

Pfeil et al. (2012). Is a 4-bit synaptic weight resolution enough? – constraints on enabling spike-timing dependent plasticity in neuromorphic hardware. Front. Neurosci. 6:90.

- Pfeil2013

Pfeil et al. (2013). Six networks on a universal neuromorphic computing substrate. Front. Neurosci. 7 (11).

- Pfeil2013STDP

Pfeil et al. (2013). Neuromorphic learning towards nano second precision. In Neural Networks (IJCNN), The 2013 International Joint Conference on, pp. 1–5. IEEE Press.

- Pfeil2015

Pfeil et al. (2013). The effect of heterogeneity on decorrelation mechanisms in spiking neural networks: a neuromorphic-hardware study. Submitted.

- Pfeil2015Phd

Pfeil (2015). Exploring the potential of brain-inspired computing. Doctoral thesis, Heidelberg University.

- Schemmel2007

Schemmel et al. (2007). Modeling synaptic plasticity within networks of highly accelerated I&F neurons. In Proceedings of the 2007 International Symposium on Circuits and Systems (ISCAS), New Orleans, pp. 3367–3370. IEEE Press.

- Schemmel2006

Schemmel et al. (2006). Implementing synaptic plasticity in a VLSI spiking neural network model. In Proceedings of the 2006 International Joint Conference on Neural Networks (IJCNN), Vancouver, pp. 1–6. IEEE Press.

- ScholarpediaShortTermPlasticity

Misha Tsodyks and Si Wu (2013) Short-term synaptic plasticity. Scholarpedia, 8(10):3153.

- ScholarpediaSTDP

Jesper Sjöström and Wulfram Gerstner (2010) Spike-timing dependent plasticity. Scholarpedia, 5(2):1362.

- Tsodyks1997

Tsodyks et al. (1997). The neural code between neocortical pyramidal neurons depends on neurotransmitter release probability. Proc. Natl. Acad. Sci. USA 94, 719–723.